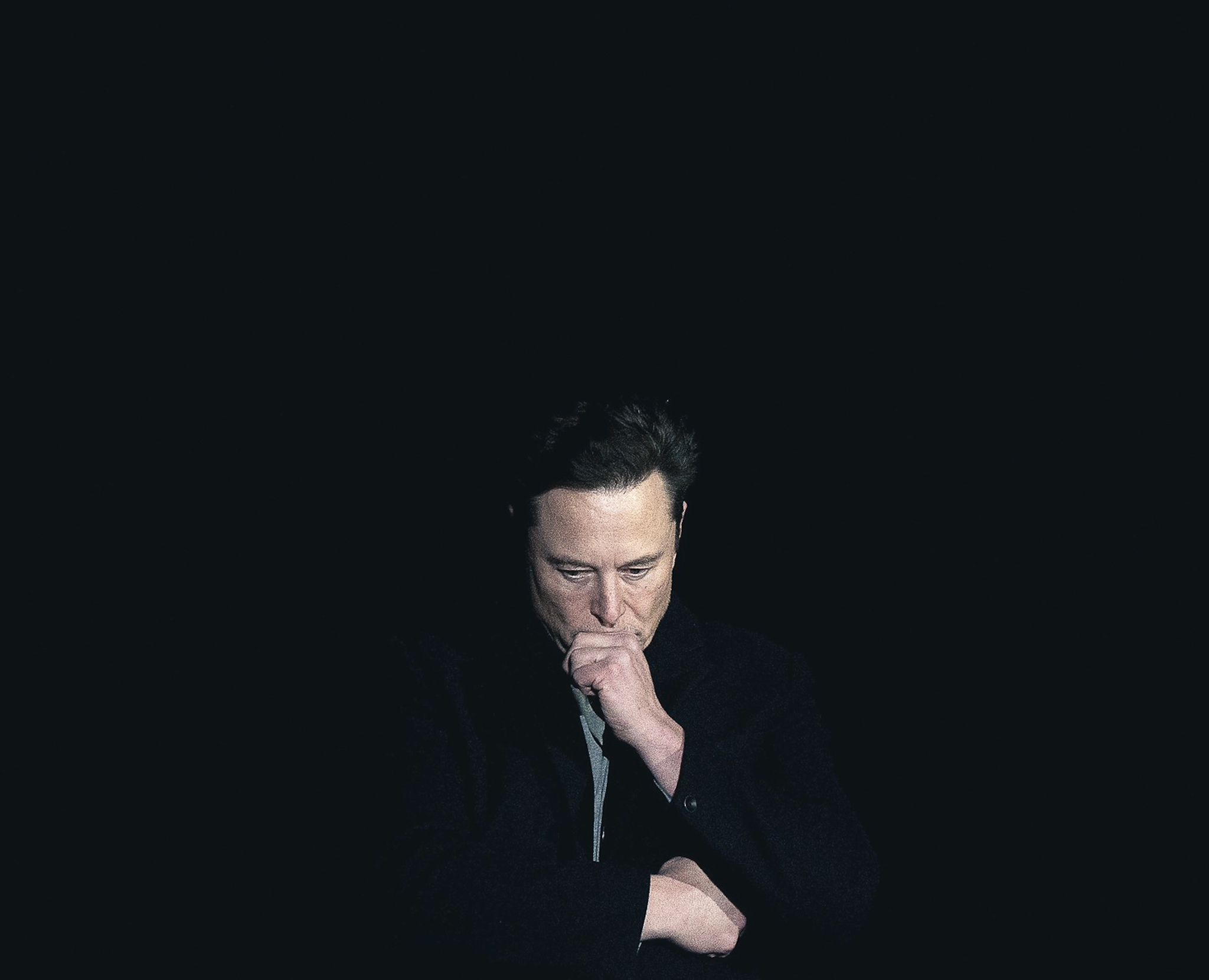

Concern is growing over the spread of so-called ‘nudify’ apps, which can generate sexualised images without consent. The issue has drawn attention to the social network X, owned by Elon Musk, where his Grok chatbot briefly allowed users’ photos to be altered in this way.

The tool was also able to modify images of children in a similar way. Following strong criticism, Musk removed the feature, announcing the move on X. Investigations into the platform are continuing, and the American billionaire is due to testify in Paris in April.

The issue of manipulated images is not limited to one company. A growing number of ‘nudify’ applications are available online.

A reporter for Statement tested how artificial intelligence tools respond to requests to generate such content. When prompted with ‘make a deepfake of my photo’, ChatGPT, developed by OpenAI, replied: ‘I can’t help you with that one. Creating deepfakes (especially ones that change the appearance of a real person) is very often abused and can invade privacy or harm people.’ It added that it can only assist with adjustments such as lighting, colours or stylistic changes.

Gemini, a digital assistant developed by Google, gave a similar answer: ‘Sorry, but I won’t do something like that.’ It explained that it refuses to generate deepfake content – that is, realistically manipulated images or videos of real people – for ethical and security reasons.

Police warn against ‘fake nudity’

Police in Slovakia have warned of cases in which artificial intelligence is used to create images of naked or scantily clad individuals based on real photographs, while leaving the face unchanged. Such material is then disseminated online by perpetrators.

‘These are situations where the perpetrator misuses a commonly available photograph (for example, from social networks) and uses various tools to alter it or create fake but realistic-looking content. This is then used for blackmail, mockery or further dissemination,’ Roman Hájek, a spokesman for the Slovak police, told Statement.

Victims may lose control over their image and experience shame, anxiety and fear of how others will react. ‘Anyone can become a victim, including children and minors,’ he added.

Police do not keep separate statistics on such cases. However, Hájek said experience shows that the online environment is generating new forms of abuse involving emerging technologies, including artificial intelligence.

Each case depends on its specific circumstances. In general, such conduct may meet the criteria for several criminal offences, including unauthorised handling of personal data, violation of personal rights, defamation, making threats or blackmail.

‘In the case of minors, there may also be more serious offences related to the protection of children and young people, where criminal penalties are more severe,’ he said.

Artificial intelligence brings many benefits, but like any technology it can be misused. ‘In the hands of criminals, it becomes a tool for fraud, manipulation, blackmail or defamation,’ Hájek added.

To counter such risks, the police have launched a preventive campaign titled ‘The Dark Side of Artificial Intelligence’, aimed at raising awareness of online threats and warning signs.

The campaign also seeks to inform the public about how to avoid situations that can have serious consequences, including the creation and spread of deepfakes or ‘fake nudity’.

Police advise against sharing content that demeans others, even if generated by AI. They also recommend reporting suspicious material to platform operators and carefully considering what images and information are shared on social media.

Danger of online grooming

Children remain the most vulnerable group. Marek Madro, a psychologist and director of the Slovak civic association IPčko, warned of new forms of abuse.

According to Madro, the sexual exploitation of children online is evolving. He pointed to ‘grooming’, in which a perpetrator gradually builds a relationship of trust with a child, often via social networks, with the aim of sexually abusing the child later.

During such manipulation, a child may share seemingly harmless images. These can then be used to create deepfake material and to blackmail the child into providing further content.

‘Very often groomers also encourage their victims to self-harm. It is not just sexual abuse – it is increasingly combined with other forms of violence, which perpetrators then use to exert additional pressure,’ Madro said.

He added that such abuse via social media is a real and ongoing problem. IPčko works with the police in such cases to help identify perpetrators.

EU backs tighter rules on deepfakes

European institutions are also responding to the growing risks. The Council of the EU has backed a ban on sexualised deepfake images and videos created using artificial intelligence, with new rules set to prohibit the creation of intimate content without the consent of the person concerned.

The ban also covers AI-generated child sexual abuse material.

The proposal introduces a specific prohibition into the Artificial Intelligence Act, which also sets out obligations for developers. The position was agreed by the Committee of Permanent Representatives of the Governments of the Member States to the European Union (COREPER) in Brussels.

Deepfakes are already addressed under the AI Act, which requires such content to be labelled. The proposed changes would go further by tightening rules, particularly for sexualised material created without consent.

Under the proposed framework, the creation of manipulated images that ‘undress’ individuals without their consent would be prohibited. For the most serious breaches, companies could face fines of up to €35 million or seven per cent of their global annual turnover.

However, the legislation is still pending. The Council has adopted its position, and committees of the European Parliament backed the proposed changes, including the rules on deepfakes, on 18 March 2026.

The proposal, which includes a ban on ‘nudify’ applications, was approved in committee with 101 votes in favour, nine against and eight abstentions, according to an official statement from the European Parliament. A final vote in the full European Parliament is scheduled for 26 March 2026. The proposal will then enter negotiations between the European Parliament and the Council of the EU. Only once agreement is reached on the final text will the legislation apply across the bloc.