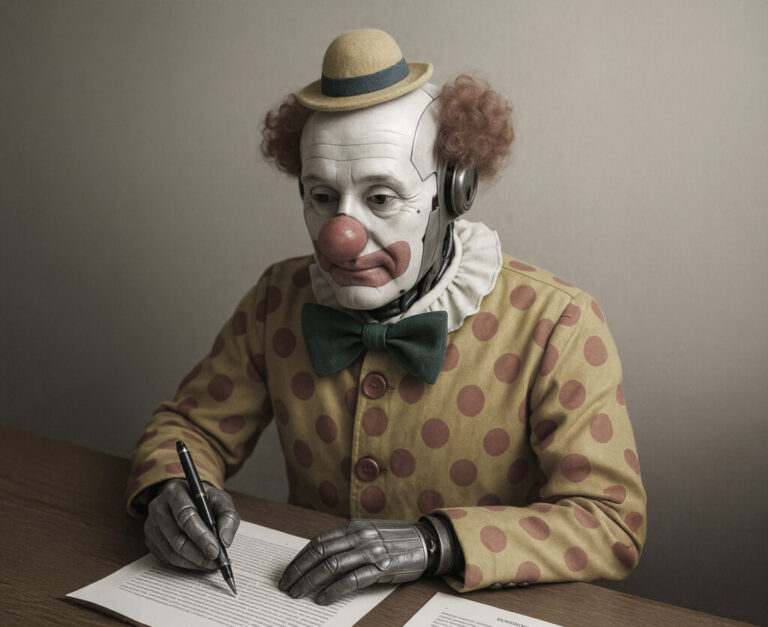

When Idiocracy premiered in 2006, director Mike Judge offered a dystopian comedy about a society dulled by automation and addicted to convenience. Nearly two decades later, the satire reads less like fiction and more like a roadmap. In Judge’s imagined future, bureaucracy is outsourced to vending machines and programs, agriculture is irrigated with energy drinks, and the presidency is held by a former wrestler draped in a flag: absurdities that now echo, uncomfortably, in the age of algorithmic governance and AI-powered assistants.

From Intelligence to Dumbness, a Devolution Story

Apple Intelligence, for example, was marketed as a revolutionary "personal intelligence" system: private, on-device AI offering context-aware assistance. In practice, it is largely a rebranded ChatGPT-like interface that requires an internet connection for most tasks, such as summarization or scheduling, and offloads them to ChatGPT. The "private cloud" is just Apple's servers, and Siri's overhaul is still in preview. Simple tasks such as scheduling or recalling context often fail, sometimes producing hallucinations (e.g., rewriting an invitation as a resignation, or mistaking a contact for a dog). Artificial intelligence is, in essence, a rebranding effort: large language models are presented as intelligent entities even though they are fundamentally similar to the more rudimentary models previously found in mobile phones, albeit with far more computational power. These models currently lack genuine cognitive ability.

AI isn't just a technical disappointment; it's negatively reshaping human cognition. An MIT study in 2025 found students using AI for essay writing showed worse neural engagement, memory, and linguistic quality, with brain scans revealing diminished prefrontal activation. A 2023 Stanford study showed automation bias led people to trust AI recommendations 30% more than human ones, even when incorrect, resulting in more errors and a 28% lower likelihood of questioning flawed AI outputs. The more we delegate to AI, the less capable we become of critical thought. As in Idiocracy, where machines serve a society that can no longer think, our machines are beginning to think for us, and we are forgetting how to doubt and think clearly. The future is idiocy through AI, not despite it.

Confident Hallucinations

In AI parlance, a “hallucination” is when a model generates content that is wrong but sounds right. According to a 2024 study by Stanford’s Institute for Human-Centered AI, state-of-the-art legal models hallucinate between 58% and 82% of the time when answering domain-specific questions. This is not a defect but a direct consequence of how what we call AI works: the model does not embody intelligence; it is more accurately described as a language aggregator.

Another peer-reviewed study, published in Royal Society Open Science, found that AI chatbots oversimplify and misrepresent scientific findings, especially in medicine, leading to unsafe interpretations in roughly a third of responses. These are hallucinations built into the system: scalable errors in code now being embedded into healthcare, education, and justice.

In Idiocracy, the justice system is a televised cage match. In our version, it’s a ChatGPT model confidently citing non-existent court decisions or diagnosing a cancer you don’t actually have.

Business Strategy: Monetize the Dumb

This presents a lucrative business opportunity. People increasingly trust AI-generated content despite its inherent flaws, and usage is already massive. The trend is established, and the acceptance of imperfect AI is evident.

Companies are not merely tolerating algorithmic mediocrity; they are actively reconfiguring their operations to embrace it. The rationale is clear: why incur the expense of human analysts producing a handful of memoranda when automated systems can generate hundreds? Why invest in training editors when AI can produce sufficiently fluent, albeit hallucinatory, content for search engine optimization? And why employ journalists when artificial intelligence can deliver a hundred articles in a single day, regardless of their qualitative shortcomings?

This trend prioritizes cost-efficiency over veracity: the affordability of AI invariably triumphs over the expense of truth. In content creation, AI-generated articles are saturating digital platforms. A minority exhibit brilliance; the majority serve as mere clickbait. Journalism, consequently, has shifted its focus from verification to SEO engagement. AI tools are increasingly being developed to produce not just headlines but also "insights" tailored to pre-existing user preferences, a form of digital parasitism.

Moreover, Microsoft's 2024 Work Trend Index indicates that 75% of knowledge workers now use AI tools on a weekly basis, with most exhibiting implicit trust. However, AI does not engage in genuine reasoning; it merely imitates. Consequently, humans, now mirroring these algorithmic outputs, are entering a reinforcing cycle of stylized irrationality: a veritable factory of plausible absurdity.

The world depicted in Idiocracy was not unintelligent by accident; its lack of intelligence was by design. Our current technological infrastructure has merely accelerated its realization.

Statement

Artificial intelligence was presented as a cognitive enhancement; it has instead evolved into a proficient mimic. In pursuit of convenience, corporations have developed systems that speak with academic fluency but reason superficially. The future is not a conflict between humanity and machinery but a convergence in which integrated systems hallucinate, flatter, and replace critical thought with pre-defined patterns. Idiocracy thus manifests not as a dystopian scenario but as a corporate operational strategy.