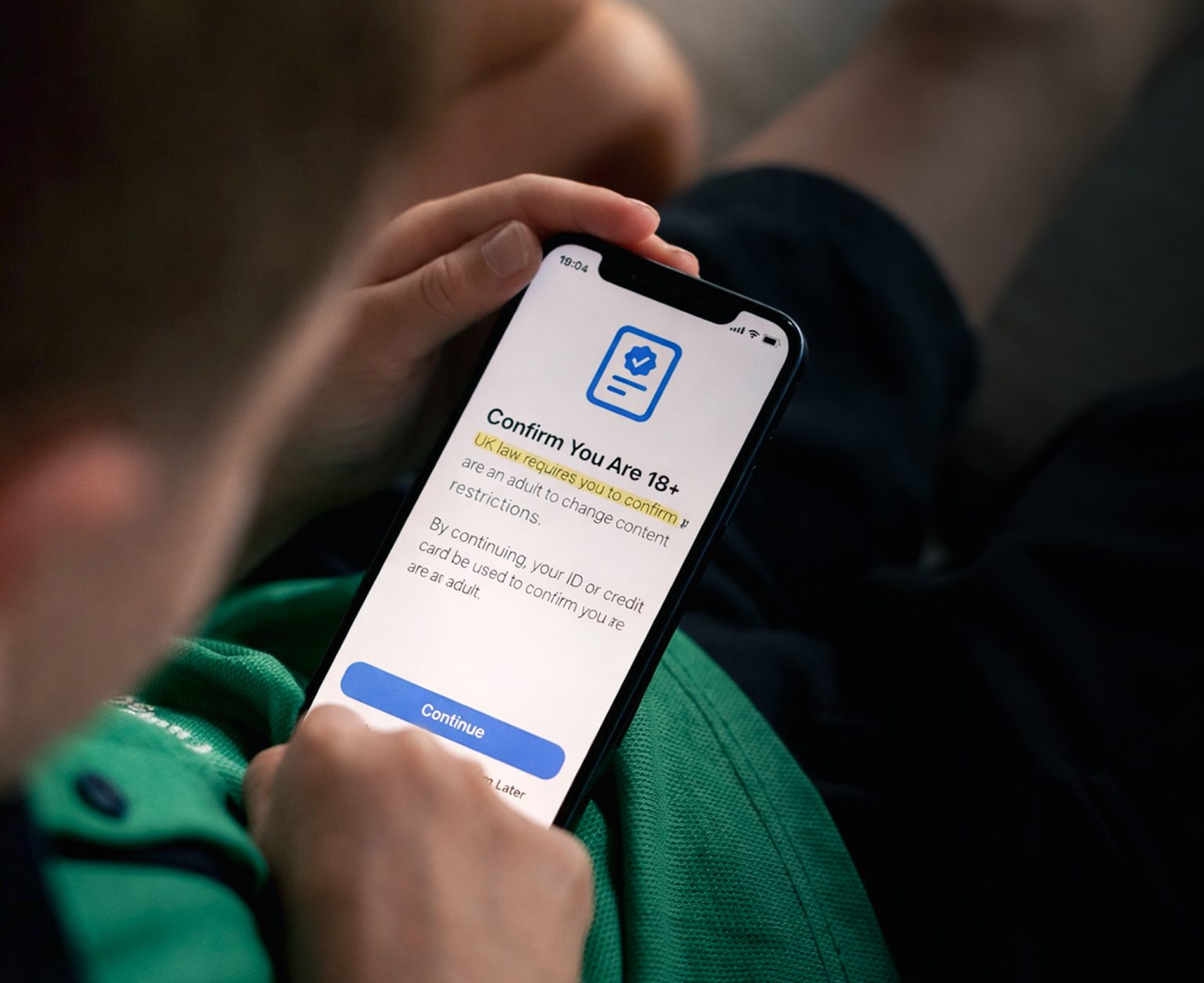

With the introduction of the new operating system iOS 26.4, Apple has implemented far-reaching age verification for its devices in the United Kingdom. After an update, users must prove they are at least 18, even on devices they already own. This can be done via a credit card, identity documents or existing payment data.

Those who refuse or fail to verify, not an uncommon outcome, are automatically placed into a restricted child-safety profile. What may at first glance appear to be a step towards greater protection for minors, and is naturally presented as such, is in reality part of a broader political and technological trend. Critics warn of surveillance that is penetrating ever deeper into digital systems.

Pressure from the state

The primary trigger for such measures in the United Kingdom is the British Online Safety Act. While Apple often tests new features in its home market, the United States, the United Kingdom has now become the key testing ground for the strictest security protocols, a shift widely seen as a direct response to the Act. Through the legislation, the British government is extending its reach into what was once described as the ‘Wild West’ of the internet. The law obliges platforms to protect minors more effectively from harmful content. Operating systems such as iOS, however, are not formally covered.

Political pressure now appears to be so strong that companies such as Apple are acting pre-emptively, tightening their grip on users beyond what lawmakers explicitly require. Regulators such as Ofcom have explicitly welcomed the measures as a gain for children and families. In practice, that perspective proves far too narrow. Apple relies on several methods, including ID scans, credit card checks and algorithmic assessments of user accounts.

Suddenly locked out

Reports suggest that the system can even draw on existing payment data and account history to estimate a user’s age. That may sound efficient at first glance, but it creates two main problems. The first is system failure, as reported by users. Even older individuals have been unable to verify their age and have been locked out as a result. The second is a lack of transparency, as the way in which the algorithms reach their decisions is not disclosed. The consequences can be severe and potentially costly. A device may become effectively unusable if age verification fails, which is particularly problematic when the device is needed for professional use.

Critics see the development as far more than a harmless protective measure. Age verification always entails identity verification and therefore the collection of sensitive data. Past experience has shown how vulnerable such systems can be to data breaches. In recent years, identity data from verification systems have repeatedly been compromised.

To protect minors, all users, including adults, must disclose their identity. The data are then stored in systems that are more or less secure. The mere act of storage systematically undermines anonymity on the internet. A data breach can have serious personal and economic consequences.

Apple’s expanding data grip

Critics regard such measures as the foundation of a digital identity infrastructure. Apple is turning the iPhone into a form of identity checkpoint, where decisions are made about what content a user may access. At present, the decisive factor is the user’s age. Tomorrow, any number of additional criteria could be introduced. Even age control itself marks a shift in principle. Until now, responsibility for regulating what minors may see has largely rested with platforms or parents. That responsibility is now moving to the level of operating systems and thus to the underlying infrastructure of the internet.

Beyond those concerns, the effectiveness of such control remains contested. Studies suggest that age verification systems can often be circumvented, for instance through VPNs or false information. At the same time, unintended consequences may arise, including discrimination or the exclusion of certain user groups. In the United Kingdom, users are already beginning to switch to alternative devices or systems. That alone suggests that technical control measures rarely function in an absolute sense.

Well-intentioned, poorly executed

Apple’s age verification is another example of the growing regulation of the internet. The protection of minors is undoubtedly a legitimate concern. Yet the current implementation raises fundamental questions, particularly with regard to data protection. It is difficult to see how it can be proportionate to require all users to verify their identity. Oversight of the resulting data structures appears, at best, insufficient.

In families, devices and even user accounts are often shared across generations, something neither programmers nor politicians seem fully to grasp. If a child uses a parent’s computer, age verification at operating system level achieves very little. The excessive accumulation of data, however, still takes place.

The most pressing question, however, is who will protect users from the risk that the infrastructure now being created could be used, or permitted to be used, for entirely different purposes in future. Data protection and child protection must not cannibalise one another in such a manner. The current trajectory points precisely in that direction, as child protection is increasingly invoked to justify far-reaching encroachments on digital freedoms.

The real challenge is to find solutions that are both effective and data-minimising. Technologies for anonymous age verification already exist, including so-called zero-knowledge methods, in which no sensitive data need be shared. Yet they have so far seen little practical application. Unless that changes, the debate over age verification, not only in the United Kingdom but worldwide, is likely to intensify further.